News

Showcasing Project Results in 2 HiPEAC’25 Workshops

The 2025 HiPEAC conference successfully brought together more than 750 experts in computer architecture, programming models, compilers and operating systems for general-purpose, embedded and cyber-physical systems. This annual event is a premier forum for...

Safety patterns for AI-based systems

Through the SAFEXPLAIN project, Ikerlan has analyzed strategies and developed specific solutions to implement safety patterns in systems that incorporate AI components. Learn more about the 4 key safety mechanisms used in the reference safety architecture pattern.

Coming Soon! SAFEXPLAIN technologies converge in an open demo

As the project enters its 27th month, releases for most technologies are already available and undergoing the last steps towards completing their integration. To demonstrate the SAFEXPLAIN approach, project partners are working towards creating an open source demo...

Developing Scenario Catalogues for SAFEXPLAIN Case Studies: the Railway Case

Part of Exida Development SRL’s work in the SAFEXPLAIN project includes developing a Catalogue of Scenarios and Test Cases for each case study. These scenarios are performed in either a real or simulated testing environment.

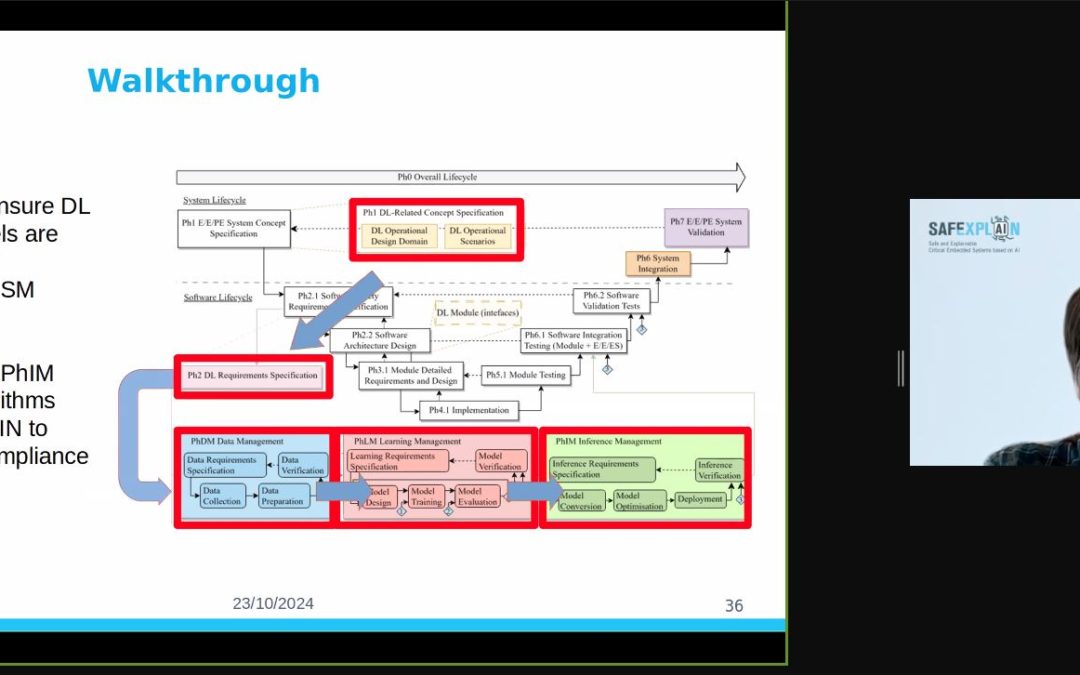

RISE Webinar Highlights XAI for Systems with Functional Safety Requirements

Dr Robert Lowe, Senior Researcher in AI and Driver Monitoring Systems from the Research Institutes of Sweden, discussed the integration of explainable AI (XAI) algorithms into the machine learning (ML) lifecycles for safety-critical systems, i.e., systems with...

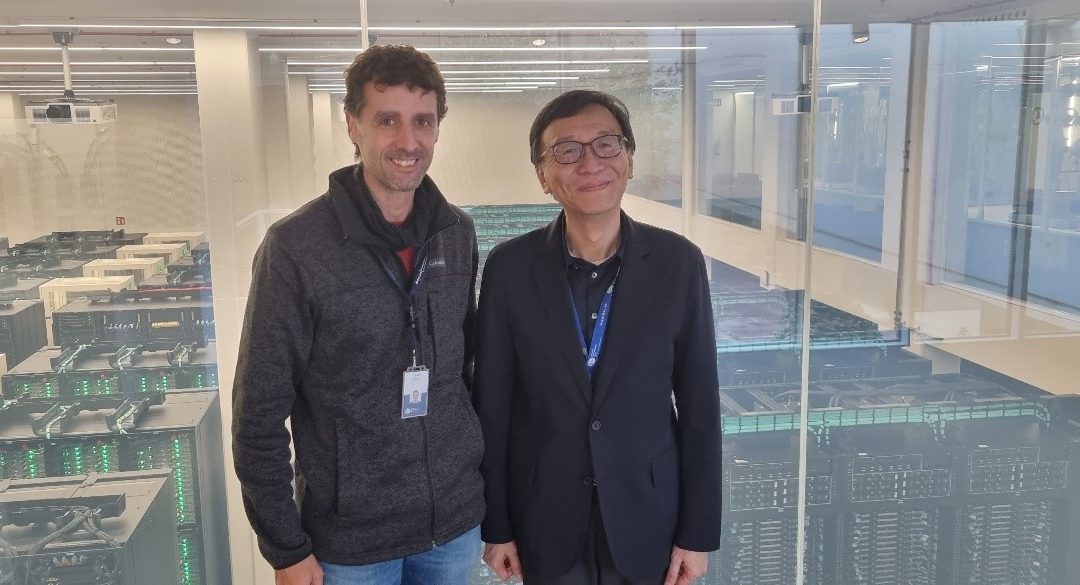

BSC receives visit from delegate from Taiwanese Institute for Information Industry

Figure 1: Photo by Francisco J. Cazorla, BSC representative also attending this meeting

The SAFEXPLAIN project was thrilled to receive a visit from Stanley Wang, Director of the Digital Transformation Research Institute, part of the Institute for Information Industry...

Contributing to EU Sovereignty in AI, Data and Robotics at the ADRF24

SAFEXPLAIN participated as a Silver Sponsor of the 2024 AI, Data and Robotics Forum, which took place in Eindhoven, Netherlands on 4-5 November 2024. This two-day event gathered leading experts, innovators, policymakers and enthusiasts from the AI, Data and Robotics...

Consortium sets course for last year at Barcelona F2F

Members of the SAFEXPLAIN consortium met in Barcelona, Spain on 29-30 October 2024 to discuss the project's progress at the end of its second year. With one year to go, project partners used this in-person meeting to tie up loose ends and ensure that...

Second IAB Meeting Confirms SAFEXPLAIN Advancements at Start of Year 3

The SAFEXPLAIN project met with members of its industrial advisory board on 03 October 2024 to present project advancements at the beginning of the project’s third and final year. This meeting was important for ensuring the project’s research outcomes align with real-world industry needs.

RISE explains XAI for systems with Functional Safety Requirements

The SAFEXPLAIN project is analysing how DL can be made dependable, i.e., functionally assured in critical systems like cars, trains and satellites. Together with other consortium members, RISE has been working on establishing principles for ensuring that DL components, together with required explainable AI supports, comply with the guidelines set forth by AI-FSM and the safety pattern(s).