SAFEXPLAIN: From Vision to Reality

AI Robustness & Safety

Explainable AI

Compliance & Standards

Safety Critical Applications

THE CHALLENGE: SAFE AI-BASED CRITICAL SYSTEMS

- Today’s AI allows advanced functions to run on high-performance machines, but its “black-box” decision-making remains a challenge for automotive, rail, space and other safety-critical applications, where a failure or malfunction may result in severe harm.

- Machine- and deep‑learning solutions running on high‑performance hardware enable true autonomy, but until they become explainable, traceable and verifiable, they can’t be trusted in safety-critical systems.

- Each sector enforces its own rigorous safety standards (space: ECSS; automotive: ISO 26262, ISO 21448 and ISO/PAS 8800; rail: EN 50126 and EN 50128), and AI must also meet these functional safety requirements.

MAKING CERTIFIABLE AI A REALITY

Our next-generation open software platform is designed to make AI explainable, and to make systems that integrate AI compliant with safety standards. This technology bridges the gap between cutting-edge AI capabilities and the rigorous demands of safety-critical environments. By bringing together experts on AI robustness, explainable AI, functional safety and system design, and testing their solutions in safety-critical applications in the space, automotive and rail domains, we’re contributing to trustworthy and reliable AI.

Key activities:

SAFEXPLAIN is enabling the use of AI in safety-critical systems by closing the gap between AI capabilities and functional safety requirements.

See SAFEXPLAIN technology in action

CORE DEMO

The Core Demo is built on a flexible skeleton of replaceable building blocks for Inference, Supervision or Diagnostic components, which allows it to be adapted to different scenarios. Full domain-specific demos are available on the technologies page.
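The idea of a skeleton with replaceable building blocks can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the names `Pipeline`, `Result`, `double`, `in_range` and `describe` are hypothetical and are not taken from the SAFEXPLAIN platform, and the toy functions stand in for real inference, supervision and diagnostic components.

```python
from dataclasses import dataclass
from typing import Callable, List

# All names here are hypothetical and for illustration only;
# they do not come from the SAFEXPLAIN platform.


@dataclass
class Result:
    output: float      # value produced by the inference block
    trusted: bool      # verdict of the supervision block
    log: List[str]     # messages produced by the diagnostic block


@dataclass
class Pipeline:
    # Each slot is a plain callable, so any block can be replaced
    # without touching the other two.
    inference: Callable[[float], float]
    supervision: Callable[[float, float], bool]
    diagnostic: Callable[[float, float, bool], str]

    def run(self, sample: float) -> Result:
        output = self.inference(sample)
        trusted = self.supervision(sample, output)
        message = self.diagnostic(sample, output, trusted)
        return Result(output, trusted, [message])


def double(x: float) -> float:
    """Toy stand-in for an AI model."""
    return 2.0 * x


def in_range(x: float, y: float) -> bool:
    """Toy supervisor: accept outputs inside a fixed range."""
    return 0.0 <= y <= 10.0


def describe(x: float, y: float, ok: bool) -> str:
    """Toy diagnostic: record what happened."""
    return f"input={x} output={y} trusted={ok}"


pipeline = Pipeline(double, in_range, describe)
accepted = pipeline.run(3.0)   # output 6.0 lies inside the range
rejected = pipeline.run(8.0)   # output 16.0 lies outside the range

# Adapting to another scenario: replace only the supervision block.
strict = Pipeline(double, lambda x, y: y <= 5.0, describe)
strict_result = strict.run(3.0)
```

Because the three blocks only meet at the `run` method, a different scenario can reuse the same skeleton and change a single component, as the `strict` pipeline does.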

SPACE

Mission autonomy and AI to enable fully autonomous operations during space missions

Specific activities: Identify the target, estimate its pose, and monitor the agent's position in order to signal potential drifts, sensor faults, etc.

Use of AI: Decision ensemble

AUTOMOTIVE

Advanced methods and procedures to enable self-driving cars to accurately detect road users and predict their trajectories

Specific activities: Validate the system’s capacity to detect pedestrians, issue warnings, and perform emergency braking

Use of AI: Decision Function (mainly visualization oriented)

Safety for AI-Based Systems

As part of SAFEXPLAIN, Exida has contributed a methodology for the verification and validation (V&V) of AI-based components in safety-critical systems. The approach combines two standards, ISO 21448 (also known as SOTIF) and ISO 26262, to address...

PRESS RELEASE: SAFEXPLAIN Unveils Core Demo: A Step Further Toward Safe and Explainable AI in Critical Systems

Barcelona, 03 July 2025. The SAFEXPLAIN project has just publicly unveiled its Core Demo, offering a concrete look at how its open software platform can bring safe, explainable and certifiable AI to critical domains like space, automotive and rail. Showcased in a...

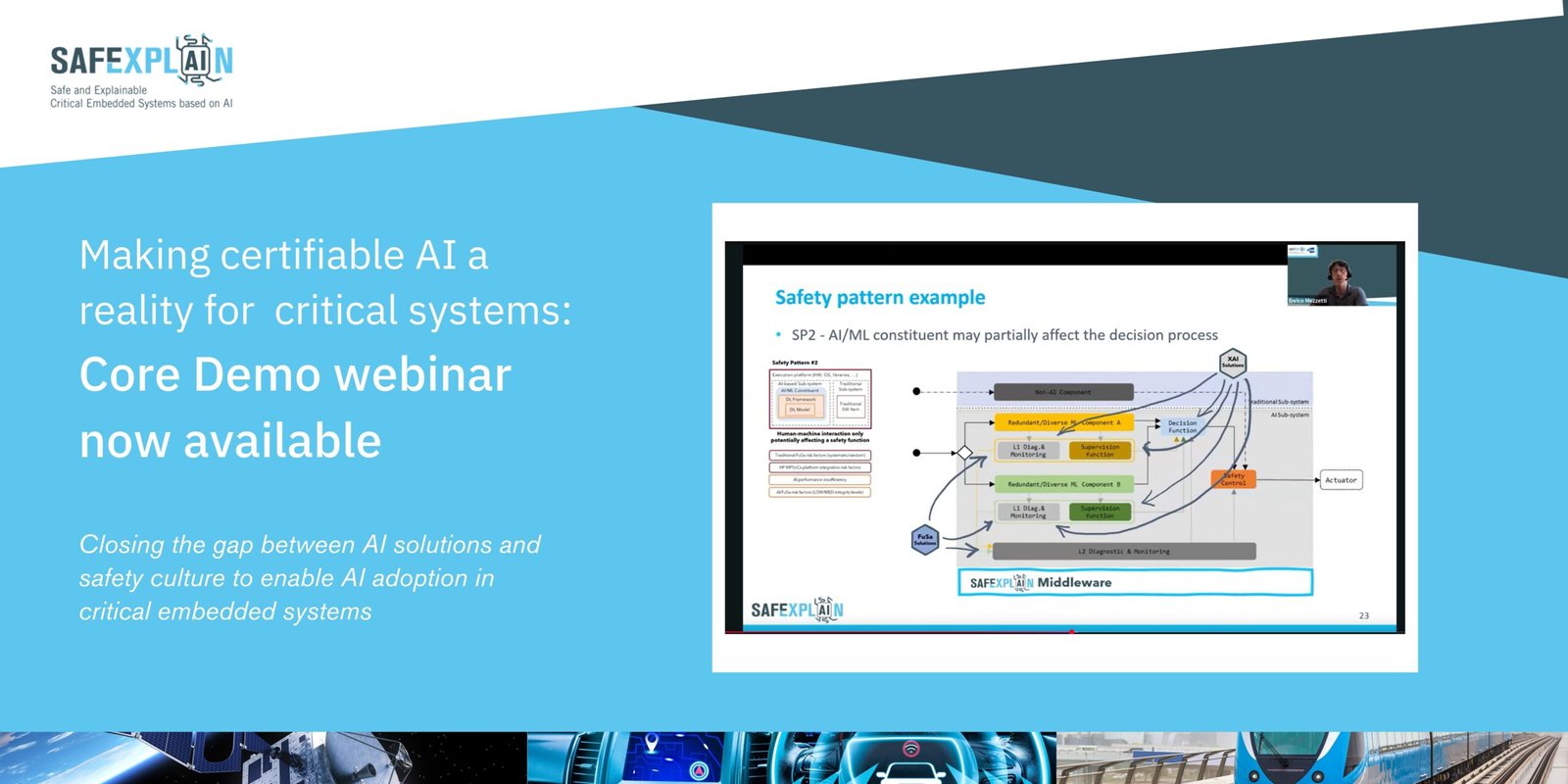

Core Demo Webinar: Making certifiable AI a reality for critical systems

Now available!

The project’s Core Demo is a small-scale, modular demonstrator that highlights the platform’s key technologies and showcases how AI/ML components can be safely integrated into critical systems.

Webinar: Putting it together: The SAFEXPLAIN platform and toolsets

The third webinar in the SAFEXPLAIN webinar series will present the innovative infrastructure behind the AI-FSM and XAI methodologies. Participants will gain insights into how the proposed solutions are integrated and how they are designed to enhance the safety, portability and adaptability of AI systems.

SAFEXPLAIN to Participate in 2025 HiPEAC Conference

Join us for two workshops at this year’s HiPEAC conference. Partners IKERLAN and RISE will be participating in the workshops "MCS: Mixed Critical Systems – Safe Intelligent CPS and the development cycle" and "Women@HPC MAR WHPC chapter: Building the diversity continuum in cutting-edge technologies". Both workshops will take place on the second day of the conference.

SAFEXPLAIN @ AI, Data, Robotics Forum

SAFEXPLAIN is happy to support the 2024 edition of the AI, Data and Robotics Forum. This two-day event is helping to unite the AI, Data and Robotics (ADR) community to support responsible innovation. The theme of this year's forum is "European Sovereignty in AI, Data...