Written by Irune Agirre, Dependable Embedded Systems, IKERLAN / Basque Research and Technology Alliance (BRTA)

Ensuring the safety of systems that rely on Artificial Intelligence (AI) is a complex task that has been widely researched in recent years. AI safety is a multidisciplinary problem that requires collaboration among experts in fields such as computer science, mathematics, statistics, and engineering, as well as industrial stakeholders. SAFEXPLAIN aims to maintain a continuous dialogue among Deep Learning (DL) experts, functional safety experts, platform experts, and industrial end-users, so that they can work together towards the safety assurance of AI-based critical systems.

In its broadest sense, AI safety means ensuring that the operation of an AI system does not pose unacceptable risks of harm to humans or the environment. To this end, it is essential to ensure that the AI system operates reliably, that the potential consequences of its unintended behavior are mitigated, and that it is possible to explain how the AI system arrived at a particular decision.

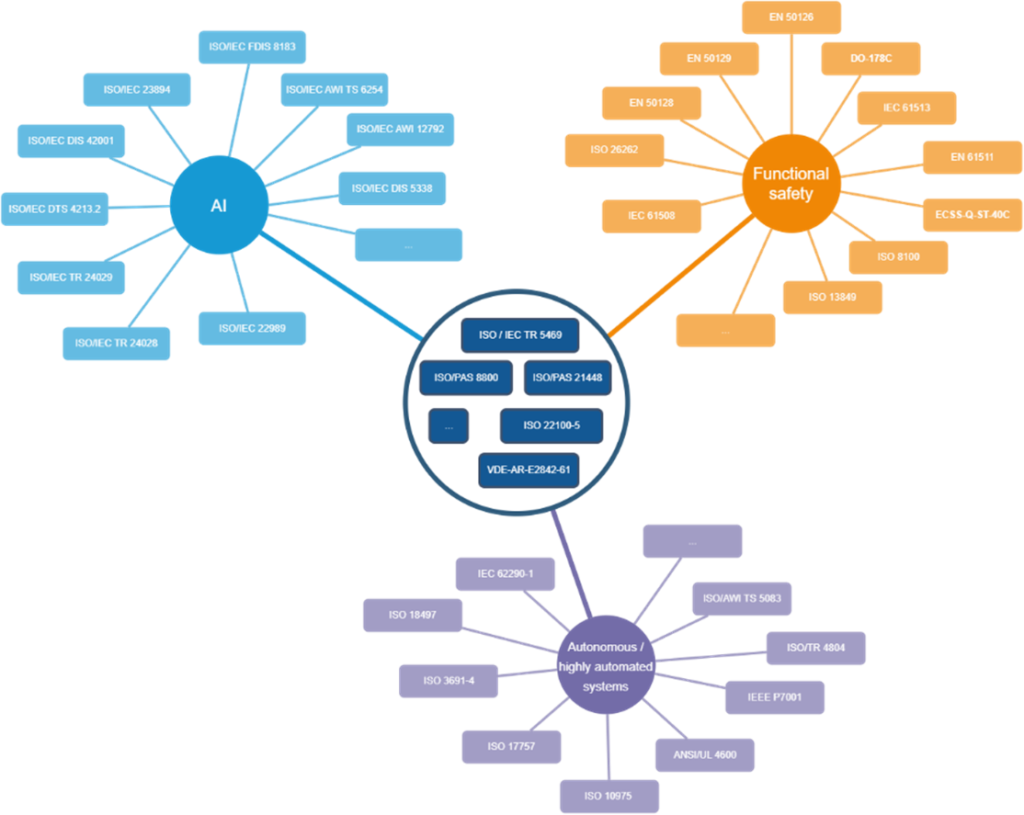

Although AI regulation is still at an early stage, the standardization landscape is expanding, and several emerging standards and technical reports aim to provide guidance for the assurance process. In its initial phase, SAFEXPLAIN surveyed existing standards and initiatives to gather the background knowledge on which to build the project's foundation. Figure 1 illustrates the wide spectrum of available and emerging standards in topics related to SAFEXPLAIN:

- Functional safety: these standards define the requirements for the development of safety-related electrical and/or electronic systems, with the aim of avoiding unacceptable risks caused by malfunctions of the electrical/electronic system.

- Artificial Intelligence: many existing and ongoing standards deal with different aspects of artificial intelligence, such as lifecycle considerations, data management, explainability, transparency, risk management, privacy, and security.

- Autonomous and/or highly automated systems: many domains adopt solutions with different levels of automation and varying degrees of human collaboration. Accordingly, some transportation and industrial domains have already defined standards, technical reports, and guidelines to deal with such automation, and some of them explicitly refer to AI-based solutions.

- Combinations of the above: ongoing standards are expected to jointly cover both functional safety and artificial intelligence (ISO/IEC TR 5469 [1] and ISO/PAS 8800 [2]). In addition, ISO 21448 [3] establishes the procedures and requirements for ensuring the Safety of the Intended Functionality (SOTIF). SOTIF is complementary to functional safety and is highly relevant for autonomous systems, because it addresses hazards caused by functional insufficiencies rather than by malfunctions.

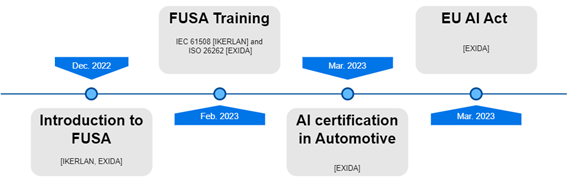

Beyond advances in the standardization framework, the adoption of AI technologies in critical domains requires continuous collaboration among academia, industry, governments, and standardization bodies to craft technologies, standards, and industrial best practices [4]. In this sense, SAFEXPLAIN has spent recent months organizing a series of internal workshops to bring DL experts, platform experts, and industrial partners closer to both traditional functional safety processes and technical solutions and emerging AI safety initiatives.

References

| [1] | ISO/IEC TR 5469 – Artificial intelligence – Functional safety and AI systems, Geneva, under development. |

| [2] | ISO/AWI PAS 8800 – Road vehicles – Safety and artificial intelligence, under development. |

| [3] | International Organization for Standardization, ISO 21448: Road vehicles — Safety of the intended functionality, Geneva, 2022. |

| [4] | J. Perez-Cerrolaza, F. J. Cazorla and J. Abella, “Uncertainty Management in Dependable and Intelligent Embedded Software,” Computer, vol. 56, no. 3, pp. 114-117, March 2023 (DOI: https://doi.org/10.1109/MC.2023.3235093). |