Introducing SAFEXPLAIN: Safe and Explainable Critical Embedded Systems based on AI

Objectives

To improve the explainability and traceability of DL components

To provide clear safety patterns for the incremental adoption of DL software in Critical Autonomous AI-based Systems (CAIS)

To integrate the SAFEXPLAIN libraries with an industrial system-testing toolset

To create architectures of DL components with quantifiable and controllable confidence, able to identify when predictions should not be released because they fall outside the component's scope of applicability or raise security concerns

To design, implement, or update selected representative DL software libraries according to safety patterns and safety lifecycle considerations, meeting specific performance requirements on relevant platforms

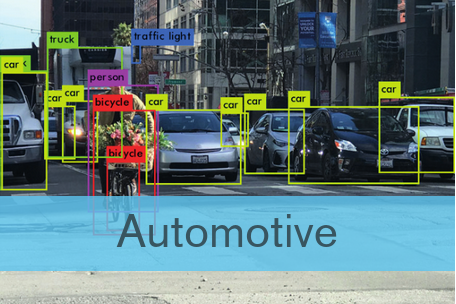

Deep Learning (DL) techniques are key to most future advanced software functions in Critical Autonomous AI-based Systems (CAIS) in cars, trains and satellites. Hence, these CAIS industries depend on their ability to design, implement, qualify, and certify DL-based software products with bounded effort and cost.

Case studies

Railway: This case studies the viability of a safety architectural pattern for the fully autonomous operation of trains (Automatic Train Operation, ATO) using intelligent DL-based solutions.

Space: This case employs state-of-the-art mission autonomy and artificial intelligence technologies to enable fully autonomous operations during space missions. These technologies are developed through highly safety-critical scenarios.

Safely docking a spacecraft to a target vehicle

The space scenario envisions a crewed spacecraft performing a docking manoeuvre to an uncooperative target (a space station or another spacecraft) at a specific docking site. The Guidance, Navigation and Control (GNC) system must be able to estimate the pose of both the docking target and the spacecraft itself, compute a trajectory towards the target, and send commands to the actuators to perform the docking manoeuvre. The safety goal is to dock with adequate precision while avoiding collisions or damage to either asset.

Halfway through the project, RISE hosts the consortium in Lund

SAFEXPLAIN consortium meets halfway through the project at the RISE venue in Lund. With the first 18 months of the project behind it, the SAFEXPLAIN consortium met in Lund on 16 and 17 April to discuss the project's status and its plans for the remaining 18 months. Great strides have...

A Tale of Machine Learning Process Models at Automotive SPIN Italia

Announcement from the Automotive SPIN Italia website: The SAFEXPLAIN project will be present at the Automotive SPIN Italia 22nd Workshop on Automotive Software & System. Carlo Donzella from partner exida development will share insights into "A Tale of Machine...

Challenges and approaches for the development of Artificial Intelligence (AI)-based Safety-Critical Systems

Jon Perez Cerrolaza from SAFEXPLAIN was invited to give a presentation on the "Challenges and approaches for the development of Artificial Intelligence (AI)-based Safety-Critical Systems" at the Instituto Tecnológico de Informática (ITI). The talk was well received by around 25 researchers from the ITI and the Polytechnic University of Valencia, who were interested in the SAFEXPLAIN perspective on AI, safety, explainability and trustworthiness.