Introducing SAFEXPLAIN:

Safe and Explainable Critical Embedded Systems based on AI

Objectives

To improve the explainability and traceability of DL components

To provide clear safety patterns for the incremental adoption of DL software in Critical Autonomous AI-based Systems (CAIS)

To integrate the SAFEXPLAIN libraries with an industrial system-testing toolset

To create architectures of DL components with quantifiable and controllable confidence that can identify when predictions should not be released because of scope-of-applicability or security concerns

To design, implement, or update selected representative DL software libraries according to safety patterns and safety lifecycle considerations, meeting specific performance requirements on relevant platforms
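The objective of withholding predictions that fall outside the scope of applicability or below a confidence level can be illustrated with a minimal sketch. This is not the SAFEXPLAIN architecture: the names `Decision` and `gated_prediction`, the `in_scope` flag, and the 0.9 threshold are hypothetical, and the maximum softmax probability is used only as a stand-in for a properly quantified confidence measure.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class Decision:
    label: Optional[int]  # None when the prediction is withheld
    confidence: float     # max softmax probability (illustrative proxy only)
    released: bool
    reason: str


def softmax(logits: Sequence[float]) -> List[float]:
    # Subtract the maximum logit for numerical stability.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def gated_prediction(
    logits: Sequence[float],
    in_scope: bool,
    min_confidence: float = 0.9,  # hypothetical threshold
) -> Decision:
    """Release the top-scoring label only if the input is in scope
    and the confidence proxy reaches the threshold."""
    probs = softmax(logits)
    label = max(range(len(probs)), key=probs.__getitem__)
    confidence = probs[label]
    if not in_scope:
        return Decision(None, confidence, False, "input outside scope of applicability")
    if confidence < min_confidence:
        return Decision(None, confidence, False, "confidence below threshold")
    return Decision(label, confidence, True, "released")
```

In a real system, the `in_scope` flag would come from a separate monitor (for example, an out-of-distribution detector), and a withheld prediction would trigger a fallback defined by the applicable safety pattern.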

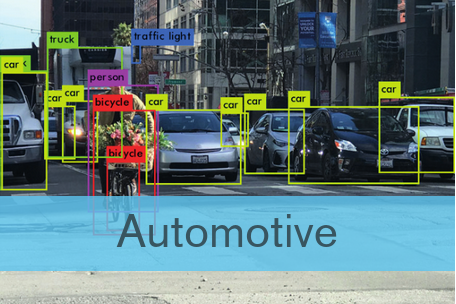

Deep Learning (DL) techniques are key for most future advanced software functions in Critical Autonomous AI-based Systems (CAIS) in cars, trains and satellites. Hence, those CAIS industries depend on their ability to design, implement, qualify, and certify DL-based software products under bounded effort/cost.

Case studies

Railway: This case studies the viability of a safety architectural pattern for the completely autonomous operation of trains (Automatic Train Operation, ATO) using intelligent Deep Learning (DL)-based solutions.

Space: This case employs state-of-the-art mission autonomy and artificial intelligence technologies to enable fully autonomous operations during space missions. These technologies are developed for highly safety-critical scenarios.

Integrating AI into Functional Safety Management

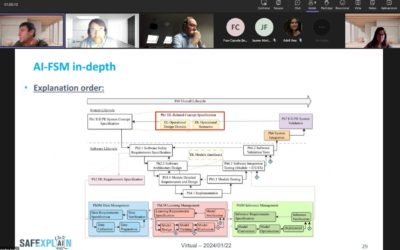

SAFEXPLAIN is developing an AI-Functional Safety Management (AI-FSM) methodology that guides the development process, maps the traditional lifecycle of safety-critical systems to the AI lifecycle, and addresses their interactions. AI-FSM extends widely adopted FSM methodologies that stem from functional safety standards to the specific needs of Deep Learning architecture specifications, data, learning, and inference management, as well as appropriate testing steps. The SAFEXPLAIN-developed AI-FSM considers recommendations from IEC 61508 [5], EASA [6], ISO/IEC 5460 [3], AMLAS [7] and ASPICE 4.0 [8], among others.

Certification bodies weigh in on SAFEXPLAIN functional safety management methodologies integrating AI

SAFEXPLAIN partners from IKERLAN and the Barcelona Supercomputing Center met with TÜV Rheinland experts on 22 January 2024 to share the project's AI-Functional Safety Management (AI-FSM) methodology. The meeting gave the project a valuable opportunity to present its work to a major player in safety certification.

Mobile World Congress 2024

The 2024 Mobile World Congress (MWC) was held in Barcelona from 26 to 29 February. Partner Barcelona Supercomputing Center (BSC) attended, presented SAFEXPLAIN project technology, and held meetings with several industry players. Hosted by the GSMA, the MWC Barcelona...

HiPEAC Workshop: Mixed Critical Systems – Safe and Secure Intelligent CPS and the development cycle

This year the SAFEXPLAIN project will take part in this workshop on mixed critical systems, led by IKERLAN. The workshop will take place on 19 January from 10:00 to 17:30. The HiPEAC conference is the premier European forum for experts in computer architecture, programming...