SAFEXPLAIN: From Vision to Reality

AI Robustness & Safety

Explainable AI

Compliance & Standards

Safety Critical Applications

THE CHALLENGE: SAFE AI-BASED CRITICAL SYSTEMS

- Today’s AI enables advanced functions to run on high-performance machines, but its “black-box” decision-making remains a challenge for automotive, rail, space and other safety-critical applications, where failure or malfunction may result in severe harm.

- Machine- and deep‑learning solutions running on high‑performance hardware enable true autonomy, but until they become explainable, traceable and verifiable, they can’t be trusted in safety-critical systems.

- Each sector enforces its own rigorous safety standards to ensure that the technology it uses is safe (space: ECSS; automotive: ISO 26262, ISO 21448 and ISO 8800; rail: EN 50126/8), and AI must also meet these functional safety requirements.

MAKING CERTIFIABLE AI A REALITY

Our next-generation open software platform is designed to make AI explainable and to make systems that integrate AI compliant with safety standards. This technology bridges the gap between cutting-edge AI capabilities and the rigorous demands of safety-critical environments. By bringing together experts in AI robustness, explainable AI, functional safety and system design, and by testing their solutions in safety-critical applications in the space, automotive and rail domains, we are contributing to trustworthy and reliable AI.

Key activities:

SAFEXPLAIN is enabling the use of AI in safety-critical systems by closing the gap between AI capabilities and functional safety requirements.

See SAFEXPLAIN technology in action

CORE DEMO

The Core Demo is built on a flexible skeleton of replaceable building blocks for Inference, Supervision and Diagnostic components, which allows it to be adapted to different scenarios. Full domain-specific demos are available on the technologies page.

SPACE

Mission autonomy and AI to enable fully autonomous operations during space missions

Specific activities: Identify the target, estimate its pose and monitor the agent's position to signal potential drifts, sensor faults, etc.

Use of AI: Decision ensemble

AUTOMOTIVE

Advanced methods and procedures to enable self-driving cars to accurately detect road users and predict their trajectories

Specific activities: Validate the system’s capacity to detect pedestrians, issue warnings, and perform emergency braking

Use of AI: Decision Function (mainly visualization-oriented)

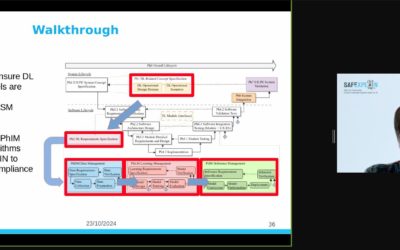

RISE Webinar Highlights XAI for Systems with Functional Safety Requirements

Dr Robert Lowe, Senior Researcher in AI and Driver Monitoring Systems at the Research Institutes of Sweden, discussed the integration of explainable AI (XAI) algorithms into the machine learning (ML) lifecycle for safety-critical systems, i.e., systems with...

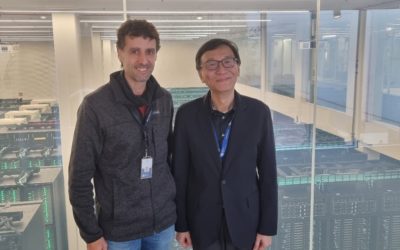

BSC receives visit from delegate from Taiwanese Institute for Information Industry

Figure 1: Photo by Francisco J. Cazorla, BSC representative also attending this meeting.

The SAFEXPLAIN project was thrilled to receive the visit of Stanley Wang, Director of the Digital Transformation Research Institute, part of the Institute for Information Industry...

Contributing to EU Sovereignty in AI, Data and Robotics at the ADRF24

SAFEXPLAIN participated as a Silver Sponsor of the 2024 AI, Data and Robotics Forum, which took place in Eindhoven, Netherlands, on 4-5 November 2024. This two-day event gathered leading experts, innovators, policymakers and enthusiasts from the AI, Data and Robotics...

SAFEXPLAIN Partner to Give Keynote at CARS Workshop

SAFEXPLAIN will attend the 8th edition of the Critical Automotive Applications: Robustness & Safety (CARS) Workshop on 8 April 2024. Partner Jon Perez Cerrolaza from Ikerlan will give the workshop keynote talk, “Artificial Intelligence, Safety and Explainability (SAFEXPLAIN)”, on day one of the workshop. SAFEXPLAIN will also participate in the workshop with its presentation “AI-FSM: Towards Functional Safety Management for Artificial Intelligence-based Critical Systems”.

Mobile World Congress 2024

The 2024 Mobile World Congress (MWC) was held in Barcelona from 26-29 February. Partner Barcelona Supercomputing Center (BSC) attended and presented SAFEXPLAIN project technology and held meetings with several industry players. Hosted by the GSMA, the MWC Barcelona...

HiPEAC Workshop: Mixed Critical Systems – Safe and Secure Intelligent CPS and the development cycle

This year the SAFEXPLAIN project will take part in this workshop on mixed-critical systems, led by IKERLAN. The workshop will take place on 19 January from 10:00 to 17:30. The HiPEAC conference is the premier European forum for experts in computer architecture, programming...